However, this 50 number appeared to be not enough either, the limit was reached: the pg_stat_progress_vacuum view displays the maximum of 50 rows.

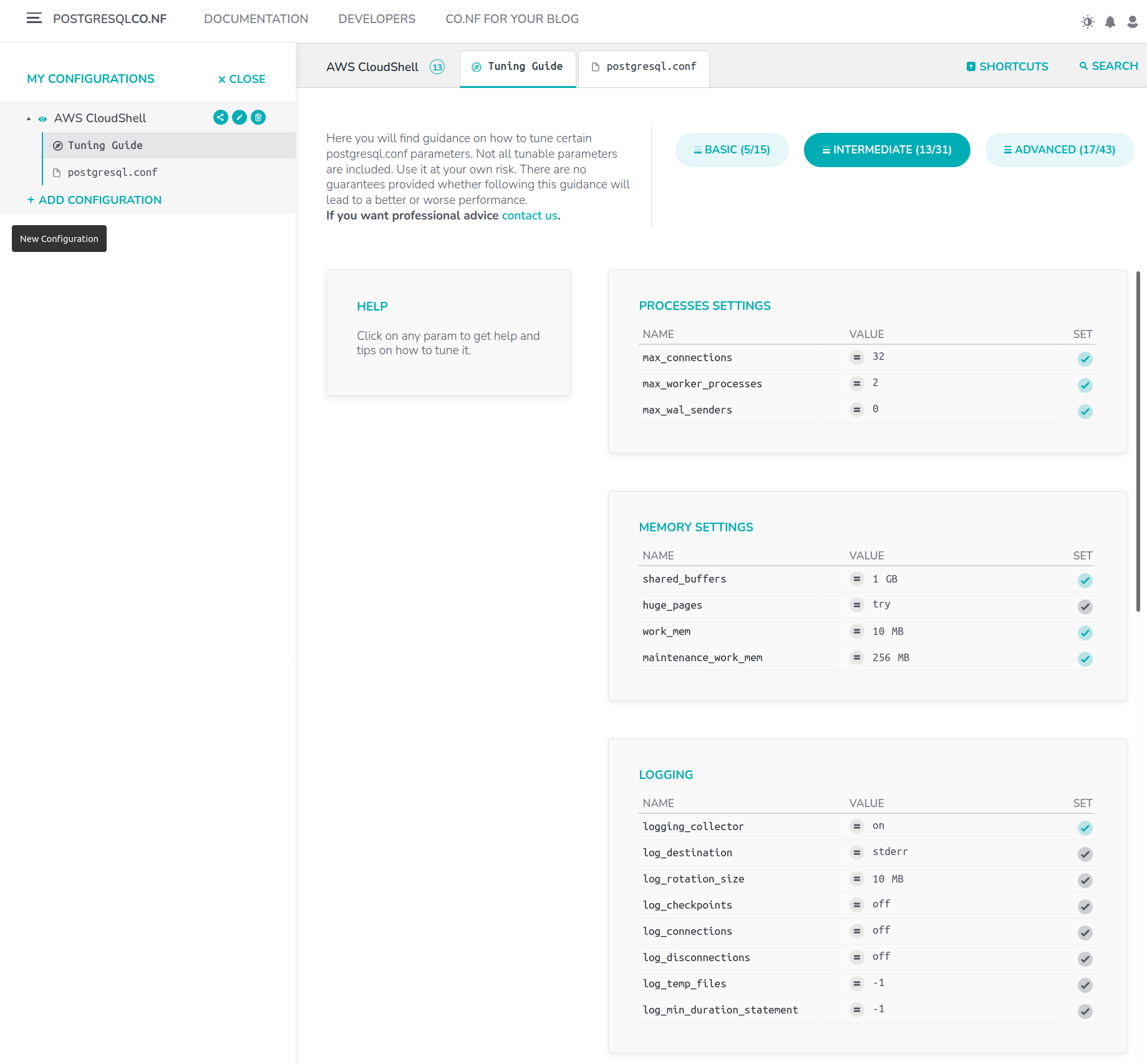

Sometimes this value could be set to, say, 10, but 50 was what our auditing team had never seen before. The autovacuum configurations were quite unusual: the autovacuum_max_workers parameter that limits the number of parallel autovacuum processes, was set to 50, while its default value is 3. Of course, Postgres documentation also covers it. To get more information about this notice, please read the essential blog post by Egor Rogov covering Autovacuum you can also refer to this material - it mentions this notice. Many of the autovacuum processes came with the “ to prevent wraparound ” notice. In our case, the Vacuum-related statistics section contained the most dreadful numbers:Īs you can see, the autovacuum process went through certain tables more than 20,000 times a day! (It this case, the report interval was set to half-day.) The number of autovacuum processes also reached the peak value. This utility pulls the samples of current statistics at configurable time intervals (say, half an hour) and aggregates data that is displayed in multi-section reports. pg_profile / pgpro_pwr accesses many system views including pg_stat_statements and works with pg_stat_kcache. We do this when it complies with the customer’s security policies). In addition to this, we install in the database either pg_profile open source utility or PWR (pgpro_pwr), its variant with enhanced functionality, both authored by Andrey Zubkov. information from pg_stat_statements, pg_stat_activity, pg_stat_all_tables.core dump of PostgreSQL processes done with gcore.stacktrace of PostgreSQL processes and their locks.the statistics on PostgreSQL processes performance pulled via perf.We visualize the received information in FlameGraph. Upon the customer’s request, we can download a custom pg_diagdump.sh script that executes perf subcommands to return files with sampling data. To do an audit, we normally use a set of open source and our own utilities. 
The tools we used to detect the issues have also been mentioned in this blog post. Their findings looked interesting to us, that’s why we decided to share them with our blog readers. This is why our support team decided to do the database audit. ZFS compresses these databases by half, now each of them occupies 90 TB of disc space, but the overall performance hasn’t changed significantly. However, the company’s analysts use the PostgreSQL databases, so the solution is business-critical. The stored data was collected from many other non-PostgreSQL databases. The customer’s Postgres databases were huge: each of the two databases was 180 TB in size. Surprisingly, PostgreSQL performance degraded compared to the times before the servers were bought. Only the HDD Fast10 with zil, truenas jail is the local jail.One of our company’s enterprise customers faced growth difficulties: the databases became huge, the needs changed, so the customer bought powerful NUMA servers and installed the ZFS file system they preferred (we call it “ZFS” for short, the official name is “OpenZFS”). SSD Fast10, 8G RAM, Sync Enabled, Atime off, 16K block. HDD Fast10 with zil, 8G RAM, Sync Enabled, Atime off, 16K block. SSD Fast10, 24G RAM, Sync Enabled, Atime off. HDD Fast10 with zil, 8G RAM, Sync Enabled, Atime off. HDD Fast10 with zil, 24G RAM, Sync Enabled, Atime off. Scaling Factor: 1 - Clients: 100 - Mode: Read Only - Average Latency: Scaling Factor: 1 - Clients: 50 - Mode: Read Only - Average Latency:
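A full pg_stat_progress_vacuum view means every autovacuum worker slot allowed by autovacuum_max_workers is occupied, so tables waiting for autovacuum have to queue. The view also lists manual VACUUM runs, so one way to count only autovacuum workers is to join it with pg_stat_activity on pid and check backend_type. Below is a minimal Python sketch of that check. It assumes the joined rows have already been fetched; the row class and helper names are hypothetical, not part of any PostgreSQL tool.

```python
from dataclasses import dataclass


@dataclass
class VacuumProgressRow:
    """Hypothetical shape of one pg_stat_progress_vacuum row joined with
    pg_stat_activity on pid, so that backend_type is available."""
    pid: int
    relid: int
    phase: str
    backend_type: str


def busy_autovacuum_workers(rows: list[VacuumProgressRow]) -> int:
    """Count rows produced by autovacuum workers; manual VACUUMs are skipped."""
    return sum(1 for row in rows if row.backend_type == "autovacuum worker")


def autovacuum_saturated(rows: list[VacuumProgressRow], autovacuum_max_workers: int) -> bool:
    """True when every autovacuum worker slot is occupied."""
    return busy_autovacuum_workers(rows) >= autovacuum_max_workers


if __name__ == "__main__":
    # Hypothetical snapshot: 50 autovacuum workers plus one manual VACUUM.
    rows = [
        VacuumProgressRow(pid=1000 + i, relid=16384 + i, phase="scanning heap",
                          backend_type="autovacuum worker")
        for i in range(50)
    ]
    rows.append(VacuumProgressRow(pid=2000, relid=20000, phase="vacuuming indexes",
                                  backend_type="client backend"))
    print(autovacuum_saturated(rows, autovacuum_max_workers=50))  # True
```

Counting by backend_type rather than by the raw row count keeps a manual VACUUM from being mistaken for an autovacuum worker.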

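Per-interval figures such as "autovacuum runs per table" come from comparing cumulative counters between two samples. pg_stat_all_tables exposes such a counter in its autovacuum_count column. The sketch below is a simplified Python illustration of that sampling idea under stated assumptions, not how pg_profile / pgpro_pwr is actually implemented. It assumes each sample is already a mapping of table name to autovacuum_count. The table names and numbers in the usage note are hypothetical.

```python
def autovacuum_deltas(start: dict[str, int], end: dict[str, int]) -> list[tuple[str, int]]:
    """Per-table autovacuum runs between two samples, busiest tables first."""
    deltas: dict[str, int] = {}
    for table, end_count in end.items():
        # Tables that appeared after the first sample start from 0.
        delta = end_count - start.get(table, 0)
        if delta < 0:
            # A smaller counter means statistics were reset in between;
            # count only what accumulated after the reset.
            delta = end_count
        deltas[table] = delta
    return sorted(deltas.items(), key=lambda item: item[1], reverse=True)


def runs_per_day(delta: int, interval_hours: float) -> float:
    """Scale a delta observed over interval_hours to a per-day rate."""
    return delta * 24 / interval_hours
```

For example, with hypothetical samples taken 12 hours apart, `autovacuum_deltas({"public.events": 1_000, "public.users": 40}, {"public.events": 6_000, "public.users": 42})` returns `[("public.events", 5000), ("public.users", 2)]`, and `runs_per_day(5000, 12)` gives `10000.0`.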

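The "to prevent wraparound" label marks autovacuum runs that PostgreSQL forces when a table's relfrozenxid becomes too old. A table becomes such a candidate once the age of its relfrozenxid exceeds autovacuum_freeze_max_age, which is 200 million transactions by default. This happens even if autovacuum is otherwise disabled for it. Multixacts follow similar logic with autovacuum_multixact_freeze_max_age. The Python sketch below illustrates only the XID comparison. Its age calculation is a simplification of PostgreSQL's age(): it ignores the reserved special XIDs and per-table storage-parameter overrides.

```python
XID_MODULUS = 2 ** 32  # transaction IDs are 32-bit counters that wrap around
DEFAULT_AUTOVACUUM_FREEZE_MAX_AGE = 200_000_000  # PostgreSQL default


def xid_age(current_xid: int, relfrozenxid: int) -> int:
    """Simplified age(): modular distance between two 32-bit XIDs.

    Ignores the reserved special XIDs and assumes the true age is below 2**31.
    """
    return (current_xid - relfrozenxid) % XID_MODULUS


def needs_wraparound_vacuum(current_xid: int, relfrozenxid: int,
                            freeze_max_age: int = DEFAULT_AUTOVACUUM_FREEZE_MAX_AGE) -> bool:
    """True if the table's relfrozenxid is older than freeze_max_age."""
    return xid_age(current_xid, relfrozenxid) > freeze_max_age
```

With these helpers, `needs_wraparound_vacuum(300_000_000, 50_000_000)` is `True` because the age is 250 million. The modular arithmetic keeps the age correct after the counter wraps, so `xid_age(100, XID_MODULUS - 100)` is `200` rather than a negative number.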